This post covers what I discovered about the difference between deferred rendering and forward rendering in Unity, and how alpha transparency forced me to learn a thing or two, plus some screenshots of how the Sunshine observation deck is coming along. It's nearly time to release it to the community, as I'm itching to start on another idea I've had. It's a bit technical, but there are nice pictures at the end.

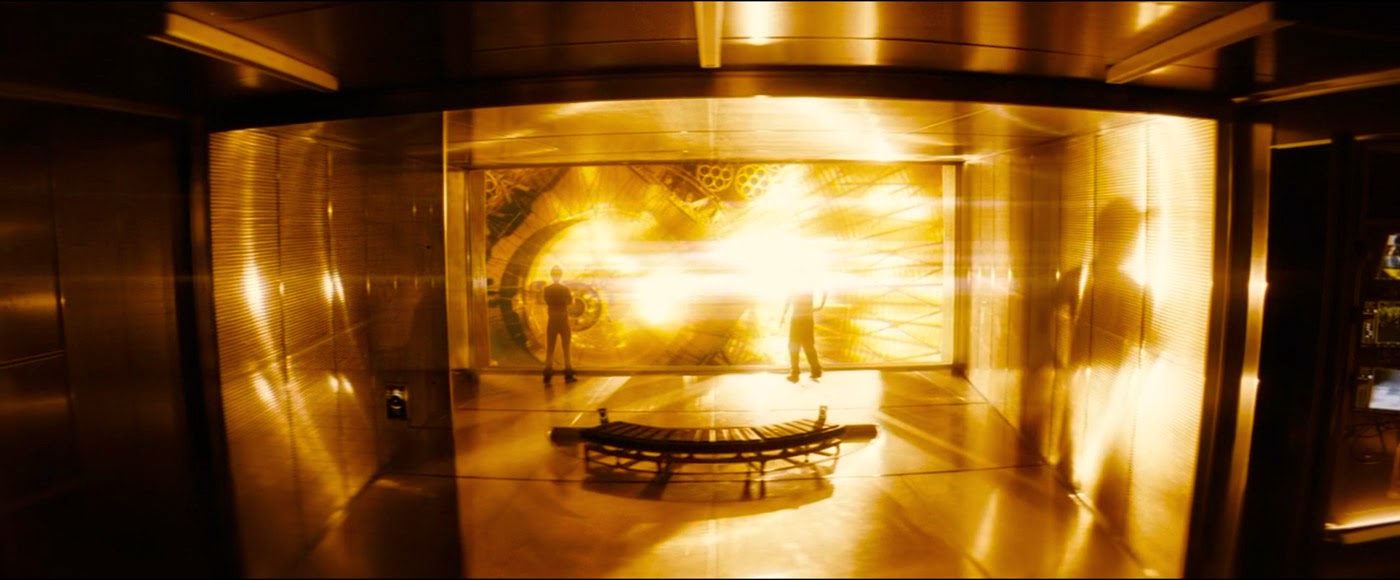

In the last post I talked about physically based rendering and cubic environment maps. Shortly afterwards I needed to create the transparent, alpha-mapped, smoked-glass window you can see in these frame grabs from the movie:

*Smoked-glass sliding door, screen left.*

*More smoked glass, this time from inside the science lab.*

I happily proceeded to create the sliding door geometry, apply Unity Pro's refractive shader and watch my frame rate plunge below 60 fps as I approached the glass.

Oh noes!!! The horror! I resigned myself to the fact that I probably couldn't afford this cool-looking effect on my GPU (an NVIDIA GTX 680MX) and swapped the Pro refraction shader for a plain alpha-mapped transparency shader. So sad. At least, I reminded myself, Oculus recommends a high frame rate over visual fidelity for achieving a sense of presence.

*So money. I must have it at all costs.*

It turns out there are two main problems, and the answer lies in the order in which Unity renders the objects I ask it to draw. I risk stuffing both feet in my mouth trying to explain this, as I have neither OpenGL coding experience nor a computer science background, but here's a quick summary. And here's a link to a great page describing both forward and deferred rendering modes if you'd like more info.

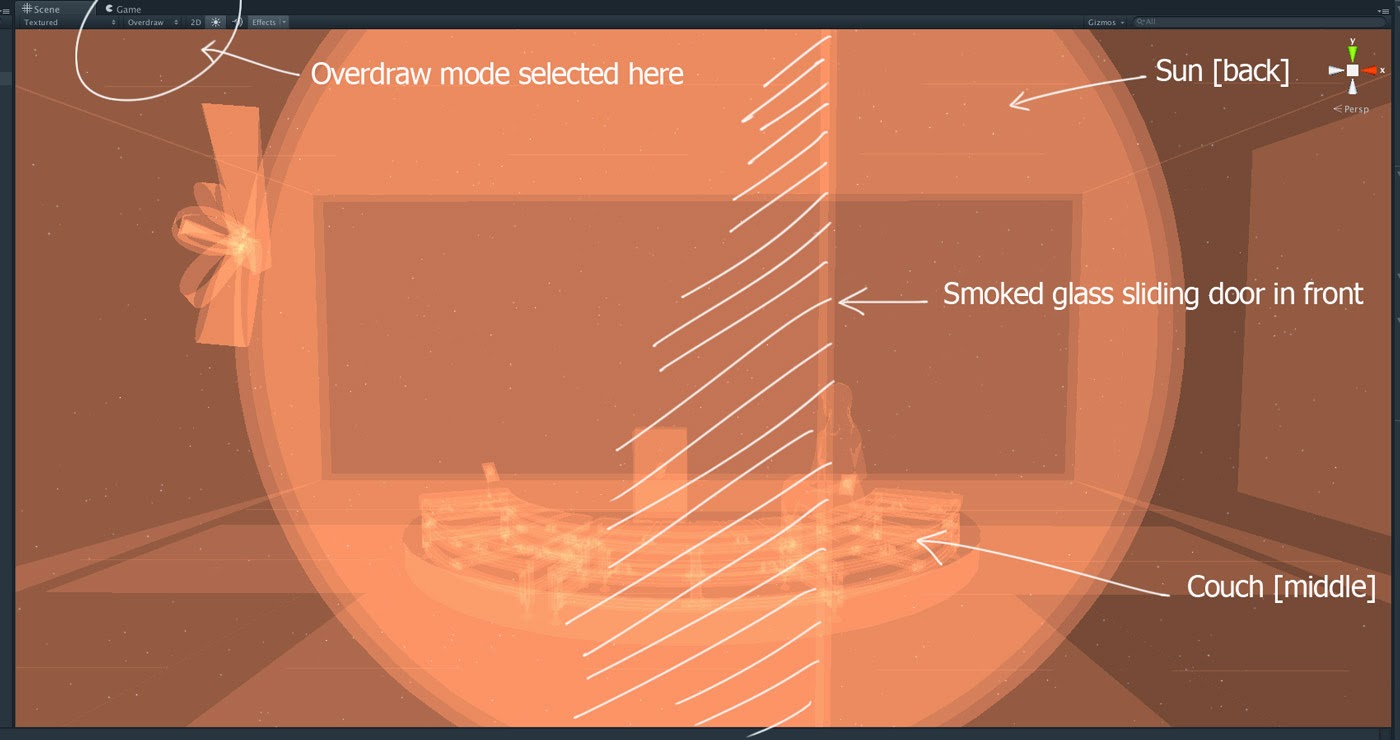

To draw the current frame, the GPU usually draws the objects in the scene starting with the furthest visible object and working towards the front, to whatever is nearest the camera. This is good because objects are stacked logically and appear in the correct order depth-wise, but it's also bad because things hidden behind other things are drawn unnecessarily, wasting GPU resources. This is the first problem. This unnecessary drawing is called 'overdraw', and Unity even has a viewport mode dedicated to showing you what's hiding behind other things:

*Unity's Overdraw viewport mode. How do you tell what is transparent and what isn't, though?*
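To make the back-to-front idea and overdraw concrete, here's a toy sketch in Python. It has nothing to do with Unity's actual renderer: the `Quad` objects, the character "framebuffer" and the per-pixel write counter are all hypothetical stand-ins. Objects are sorted furthest-first, each one simply paints over whatever is already there, and every write beyond the first on a pixel is counted as overdraw, which is roughly the kind of thing the Overdraw view visualises.

```python
from dataclasses import dataclass


@dataclass
class Quad:
    """Hypothetical screen-aligned rectangle standing in for a scene object."""
    name: str
    x0: int
    y0: int
    x1: int        # exclusive
    y1: int        # exclusive
    depth: float   # distance from the camera; larger means further away
    colour: str    # single character used to "paint" the pixel


def painters_draw(objects, width, height):
    """Draw objects far-to-near (painter's algorithm), counting writes per pixel."""
    frame = [["." for _ in range(width)] for _ in range(height)]
    writes = [[0] * width for _ in range(height)]
    # Furthest object first, nearest object last.
    for obj in sorted(objects, key=lambda o: o.depth, reverse=True):
        for y in range(max(obj.y0, 0), min(obj.y1, height)):
            for x in range(max(obj.x0, 0), min(obj.x1, width)):
                frame[y][x] = obj.colour   # nearer objects paint over further ones
                writes[y][x] += 1
    return frame, writes


def overdraw(writes):
    """Writes beyond the first on each pixel: wasted work when everything is opaque."""
    return sum(max(n - 1, 0) for row in writes for n in row)


scene = [
    Quad("back wall", 0, 0, 8, 4, depth=10.0, colour="W"),
    Quad("couch", 1, 2, 5, 4, depth=5.0, colour="C"),
    Quad("monitor bank", 3, 0, 7, 4, depth=2.0, colour="M"),
]
frame, writes = painters_draw(scene, 8, 4)
for row in frame:
    print("".join(row))                    # WWWMMMMW, WWWMMMMW, WCCMMMMW, WCCMMMMW
print("overdraw:", overdraw(writes))       # overdraw: 24
```

With all three objects opaque, those 24 extra writes are pure waste: only the last write to each pixel ends up on screen.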

The second part of the problem is that I'd asked Unity to render in deferred mode, where the geometry is processed in multiple passes that separate the jobs of drawing, lighting and texturing the scene's objects. The lighting pass is the main advantage of deferred rendering: many, many lights can contribute to the scene's illumination cheaply, because no texturing or other work is done at that time, hence you can have lots of dynamic lights. But during the remaining texturing and compositing phase, the transparent portions of the foreground objects must be considered when calculating the pixel values of the objects behind them, and it's this continuous checking and sampling that destroys any speed gains. The glass, and the way its transparency contributes to the appearance of the objects behind it, has to be considered at every step. In fact, the closer you get to the glass, or the more of your view it covers, the slower the GPU goes.
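A deferred renderer can be sketched roughly like this. It's heavily simplified and not Unity's implementation: a geometry pass keeps only the nearest surface per pixel in a "G-buffer" (here just depth, a greyscale albedo and a normal), then a separate lighting pass loops over the lights for each stored pixel using simple Lambert (N·L) shading. The `Fragment` and `GBufferTexel` types and the one-row "screen" are hypothetical, for illustration only.

```python
from dataclasses import dataclass
from typing import Optional

Vec3 = tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


@dataclass
class Fragment:
    """A surface sample produced while rasterising some object (hypothetical)."""
    pixel: int
    depth: float
    albedo: float   # greyscale surface colour, 0..1
    normal: Vec3


@dataclass
class GBufferTexel:
    """What the G-buffer holds for a pixel: one surface, no more."""
    depth: float
    albedo: float
    normal: Vec3


def geometry_pass(fragments, width):
    """Pass 1: store the nearest surface per pixel. No lighting happens here."""
    gbuffer: list[Optional[GBufferTexel]] = [None] * width
    for f in fragments:
        current = gbuffer[f.pixel]
        if current is None or f.depth < current.depth:
            gbuffer[f.pixel] = GBufferTexel(f.depth, f.albedo, f.normal)
    return gbuffer


def lighting_pass(gbuffer, lights):
    """Pass 2: for each stored pixel, add Lambert (N.L) light from every light."""
    image = []
    evaluations = 0  # how many pixel/light pairs were shaded
    for texel in gbuffer:
        if texel is None:  # nothing was drawn at this pixel
            image.append(0.0)
            continue
        total = 0.0
        for direction, intensity in lights:
            total += texel.albedo * intensity * max(dot(texel.normal, direction), 0.0)
            evaluations += 1
        image.append(total)
    return image, evaluations


frags = [
    Fragment(0, 10.0, 0.5, (0.0, 0.0, 1.0)),  # wall at pixel 0
    Fragment(0, 2.0, 0.2, (0.0, 0.0, 1.0)),   # glass in front of it: the wall is discarded
    Fragment(1, 10.0, 0.5, (0.0, 0.0, 1.0)),  # wall at pixel 1
]
gbuffer = geometry_pass(frags, width=3)
image, evaluations = lighting_pass(gbuffer, [((0.0, 0.0, 1.0), 1.0), ((0.0, 0.0, -1.0), 1.0)])
print(image, evaluations)  # [0.2, 0.5, 0.0] 4
```

Two things fall out of this sketch. Lighting cost is roughly covered pixels × lights, however many objects were drawn, which is why lots of dynamic lights are cheap. And each G-buffer texel holds exactly one surface, so a glass pane and the wall behind it can't both be stored; transparent objects have to be handled some other way, typically in a separate forward-style pass.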

Forward rendering, however, also draws the objects from back to front, but it draws, lights and textures each of the scene's objects as it goes. Each object is rendered in its entirety, then the next closest, and so on, until at the last millisecond the transparent glass right at the front is drawn over the top and BLAM, that frame is done. It's this brute-force approach that makes drawing transparent foreground objects feasible.
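Forward rendering with a transparent pane can be sketched for a single pixel like this. It's a hypothetical, simplified illustration: each surface is lit and textured the moment it's drawn (`shade` just scales a pre-textured RGB colour by a light intensity), surfaces are drawn far to near, and each one is composited with the standard "over" blend, `src × alpha + dst × (1 − alpha)`, so an opaque surface (alpha 1.0) simply overwrites what's there.

```python
from dataclasses import dataclass

RGB = tuple[float, float, float]


@dataclass
class Surface:
    """One object covering the pixel (hypothetical, with a pre-textured colour)."""
    name: str
    depth: float
    colour: RGB          # textured RGB in 0..1
    alpha: float = 1.0   # 1.0 = opaque, below 1.0 = see-through


def shade(surface: Surface, light: float) -> RGB:
    """Forward style: light the surface right now, as it is drawn."""
    r, g, b = surface.colour
    return (r * light, g * light, b * light)


def blend_over(src: RGB, alpha: float, dst: RGB) -> RGB:
    """Standard 'over' blend: src * alpha + dst * (1 - alpha)."""
    return tuple(s * alpha + d * (1.0 - alpha) for s, d in zip(src, dst))


def forward_render_pixel(surfaces, light: float, background: RGB = (0.0, 0.0, 0.0)) -> RGB:
    """Draw every surface far to near, blending each one over the result so far."""
    colour = background
    for surf in sorted(surfaces, key=lambda s: s.depth, reverse=True):
        colour = blend_over(shade(surf, light), surf.alpha, colour)
    return colour


wall = Surface("back wall", depth=10.0, colour=(0.8, 0.6, 0.4))
glass = Surface("smoked glass", depth=1.0, colour=(0.1, 0.1, 0.1), alpha=0.4)
print(forward_render_pixel([glass, wall], light=1.0))  # roughly (0.52, 0.40, 0.28)
```

Real renderers usually draw all the opaque objects first and then sort only the transparent ones back to front; sorting everything here just keeps the sketch short.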

Sure enough, switching Unity to forward rendering instantly restored my frame rate and let me use the refractive shader, which looks cool. It's a little over the top, as glass on a spaceship this modern probably has no imperfections at all to distort the background, but I think it adds to the ambience. And since my scene is mostly static, I was able to bake the shadow-casting lights, and the other extras deferred rendering would have given me, into the lightmaps. That's my problem solved!
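Baking can be sketched the same way: do the expensive lighting work once, offline, and store the result per lightmap texel, so at runtime each texel costs a single multiply however many lights went into it. This is a simplified, hypothetical illustration, not Unity's lightmapper: lights are directional, shading is Lambert, and shadows are represented by a made-up `occluded` set of (texel, light) pairs that a real baker would work out for itself.

```python
Vec3 = tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def bake_lightmap(texel_normals, static_lights, occluded=frozenset()):
    """Offline: sum Lambert light from every static light into one value per texel.

    `occluded` is a hypothetical set of (texel_index, light_index) pairs standing
    in for baked shadows.
    """
    lightmap = []
    for t_idx, normal in enumerate(texel_normals):
        total = 0.0
        for l_idx, (direction, intensity) in enumerate(static_lights):
            if (t_idx, l_idx) in occluded:
                continue  # this texel is in that light's shadow
            total += intensity * max(dot(normal, direction), 0.0)
        lightmap.append(total)
    return lightmap


def shade_with_lightmap(albedo, lightmap):
    """Runtime: one multiply per texel, however many lights were baked in."""
    return [a * light for a, light in zip(albedo, lightmap)]


normals = [(0.0, 0.0, 1.0), (0.0, 1.0, 0.0)]
lights = [((0.0, 0.0, 1.0), 0.5), ((0.0, 1.0, 0.0), 0.25), ((0.0, 0.0, 1.0), 0.25)]
lightmap = bake_lightmap(normals, lights, occluded={(0, 2)})  # texel 0 shadowed from light 2
print(lightmap)  # [0.5, 0.25]
print(shade_with_lightmap([0.8, 0.4], lightmap))
```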

Phew. If you're still here, congrats. Hope that wasn't too painful. And if I got any of this wrong, don't hesitate to tell me in the comments. Now for some screenshots of how the observation deck is coming along.

EDIT: Here's a great read/rant from a graphics programmer about the different rendering styles as well as a discussion on why it's hard to make mirrored surfaces in games: http://www.reddit.com/r/gaming/comments/12j1jn/

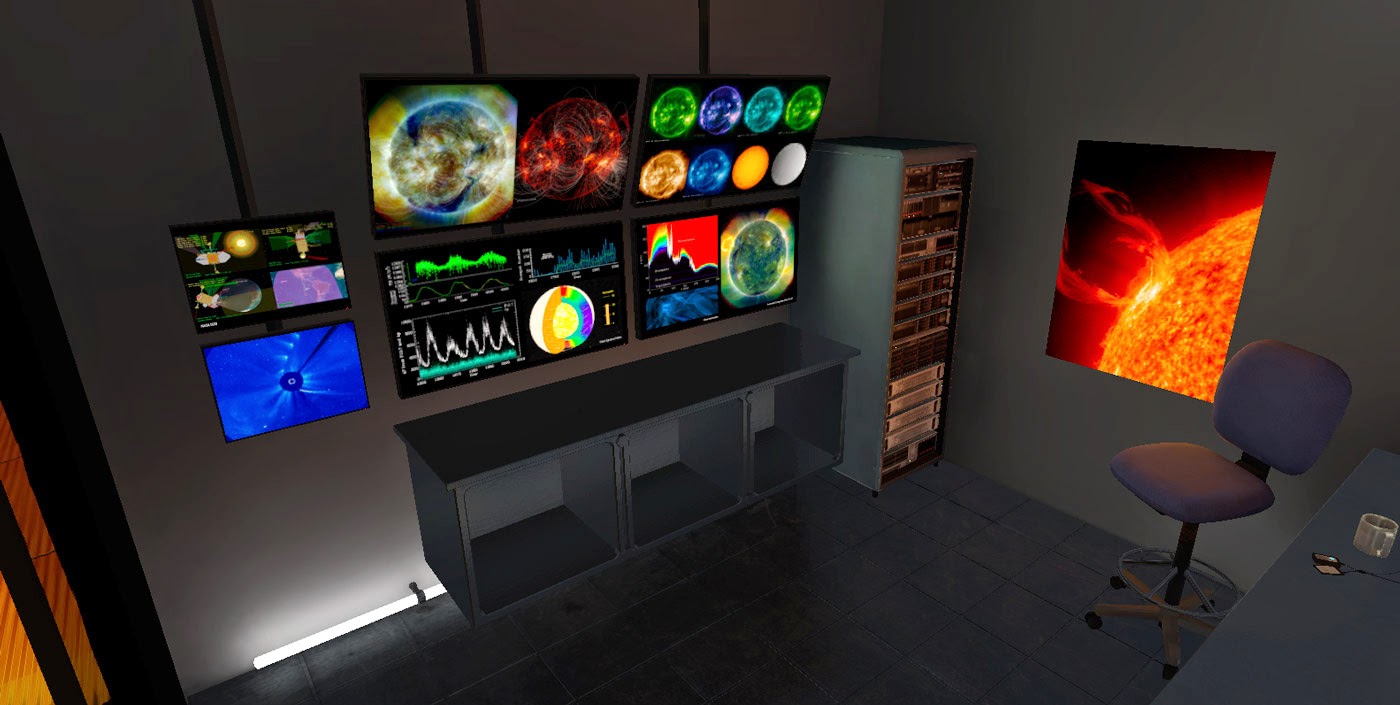

Please bear in mind that the following images lack the optical effects of the Rift's view, which go a long way towards creating the atmosphere that's missing here. But the double image on the Rift's screen makes it hard to see what's there, so this is how you can see it for now:

*I found a free, high-quality model of a seated woman online to share the viewing couch.*

*Viewing deck exposure controls are present.*

*Rear corridor hatch and controls.*

*Rear wall normal-mapped panelling.*

*Science lab doorway.*

*Ergonomic chair. On a spaceship. Of *course* it's ergonomic. Who'd send an un-ergonomic chair into space!?*

*Monitor bank, and (how exciting) a server rack. Blinking lights.*

Till next time!

-j

Hey dad I'm going to watch your video for this!!!! You are sooo cool! From Jamie

Thanks Jamie! You might be my biggest fan ;-)

-dad

Hey, dad. These problems are compounded if you try to view them in VR. It's pretty obvious that we have to use forward rendering until the resolution increases.
